Codehouse: my digital life

-

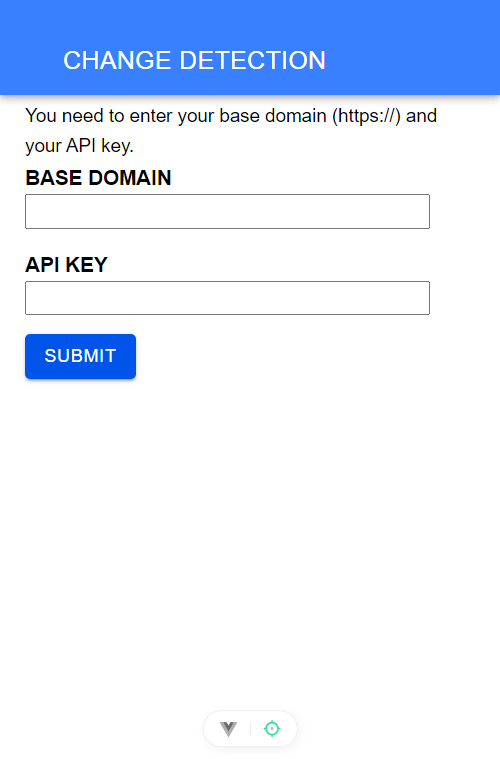

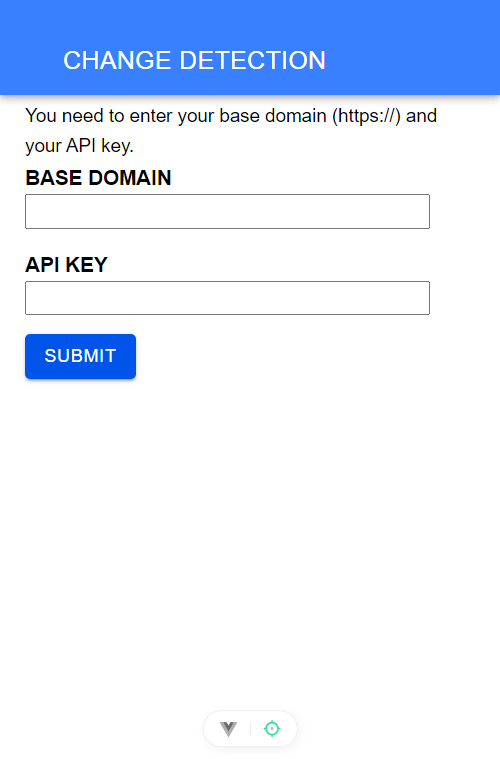

ChangeDetection Android App

Changedetection.io detects changes in the pages that you frequently visit. It is open source and you can host it on your own server. It has its own API, on which I based this small prototype Android app.

-

How to install the most recent Python on your Synology DiskStation

How to obtain the most recent version of Python on your Synology NAS.

-

Do you trust AI blindly? Think again…

I’ve decided to write my own DHCP server for a variety of reasons: every time my router’s DHCP has to be reset, I have to re-enter, one by one, the manual static entries for every network component. In other words, with a server of my own making, I can more easily move……

-

Creating a minimal Python development environment under Windows

This article explains how to get a minimal, working Python environment under Windows. After exploring several alternatives, such as a micromamba script based on Anaconda, I kept researching how to obtain a stripped-down version of Python to use as a stub for running non-compiled code under Windows. Python.org has the embedded Python distribution……

-

Synchronize a remote Dropbox directory with a local one in Python

This is the counterpart of the previous post: in this case, remote changes are cloned locally.

-

Creating a real-time local to cloud synchronizer in Python

This small Python program propagates changes in the local file system to remote Dropbox cloud storage in real time.

-

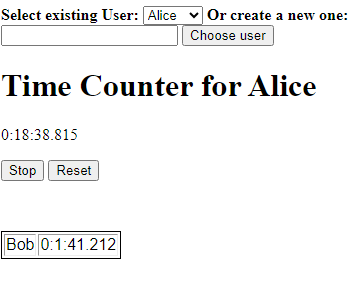

Meeting TimeCounter

Lets you track the time you spend in meetings through a simple web application.

-

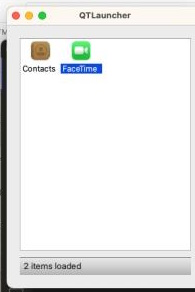

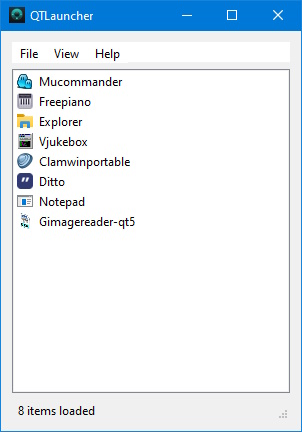

Select the best GUI toolkit – and we have a winner!

After trying seven frameworks, I finally came across the most useful of them all.

-

Scrobbling from Shazam on Android via Tasker (…and PHP)

To scrobble what I listen to on my Android phone, I usually rely on the scrobbling capability built into the music player apps. For songs you don’t have on your phone, you can use music recognizers like Shazam. But how can you send your Shazamed songs to Last.FM?

-

Select the best GUI toolkit – part 7: PyQt

And finally we reach the end: the last of the GUI frameworks I decided to experiment with, PyQt. PyQt is a set of Python bindings, developed by Riverbank Computing, for Qt, the framework maintained by the Qt Company that KDE, one of the desktop environments used on Linux, is built on.